Table of Contents

- Why Most Technical Assessments Fail

- The Real Cost of Broken Interview Processes

- The Four-Stage Assessment Framework

- Stage One: Initial Technical Screen (The Filter)

- Stage Two: Practical Coding Assessment (The Reality Check)

- Stage Three: System Design and Problem-Solving (The Depth Test)

- Stage Four: Cultural and Communication Fit (The Integration Check)

- What’s Different in 2026: AI-Aware Assessments

- Common Mistakes That Drive Away Top Talent

- How to Adapt for Contractors vs Full-Time Hires

- Implementation Timeline and Checklist

- How HR Oasis Helps You Build Better Assessments

- Frequently Asked Questions

“The number one reason candidates fail coding interviews is not lack of knowledge, it’s jumping into code before understanding the problem.” – GeekBye Technical Interview Guide, 2026

Your best candidate just withdrew from your hiring process. Not because they failed the technical assessment. Because they decided your process was broken and didn’t want to work somewhere that evaluates talent this way.

This happens more than you think. A mid-level developer with 6 years of experience, strong GitHub contributions, and two solid references gets rejected because they couldn’t solve a binary tree puzzle in 45 minutes on a whiteboard. Meanwhile, a candidate who spent three months grinding LeetCode passes your assessment but can’t debug a real production issue to save their life.

Six months later, the LeetCode grinder is gone (couldn’t handle actual engineering work), and you’re still trying to backfill the role. Total cost of this broken process? North of $150,000 when you factor in recruiting fees, lost productivity, training time wasted, and the damage to your employer brand.

According to research analyzing technical hiring processes, 65 percent of developers now prefer hands-on practical assessments over traditional whiteboard interviews. Yet most companies still run interviews optimized for algorithm memorization rather than actual engineering judgment.

After working with hundreds of companies across Argentina, Mexico, Colombia, and the broader Latam region as they hire both local employees and remote contractors, we’ve seen what separates effective technical assessments from theater. Here’s a complete framework for evaluating developers in a way that actually predicts job performance while respecting candidates’ time and expertise.

Why Most Technical Assessments Fail

Let’s start with why technical interviews are broken at so many companies.

Failure Mode One: Testing the Wrong Skills

Most technical assessments test isolated algorithmic problem-solving with no business context. It’s like evaluating an architect by having them build with Legos. Real-world engineering demands understanding business requirements, balancing competing priorities, and making thoughtful trade-offs.

A developer may write perfectly optimized code that solves the wrong problem entirely. Your assessment never catches this because it never asks them to clarify requirements or consider business constraints.

Failure Mode Two: Unrealistic Constraints

Companies set impossible time limits, expect perfect solutions, and create artificial pressure that has nothing to do with actual work. Then they wonder why production code is buggy and hard to maintain. You hired people who can perform under ridiculous constraints, not people who write quality code.

Failure Mode Three: No Real-World Context

Abstract puzzles divorced from actual work tell you almost nothing about how someone will perform. Can they debug a production issue? Review code effectively? Make architectural decisions with long-term implications? Design a system under real constraints? Your whiteboard problem doesn’t answer any of this.

Failure Mode Four: Broken Signal-to-Noise Ratio

You spend hours having senior engineers conduct algorithmic assessments that they know don’t reflect real work. Meanwhile, actual development falls behind. The morale impact is real. Senior developers watch qualified candidates get rejected while algorithm-crammers make it through, and they lose faith in the hiring process.

The Real Cost of Broken Interview Processes

The price of flawed technical assessment extends far beyond missed hires.

Direct Costs:

- Recruiting fees for wrong hires: $30,000 to $50,000 per bad senior hire

- Wasted training and onboarding: $15,000 to $25,000

- Severance and replacement recruiting: another $30,000 to $50,000

Indirect Costs:

- Bugs introduced into production

- Technical debt from poor architectural decisions

- Delayed features and missed milestones

- Time senior engineers spend fixing problems

- Morale damage to existing team

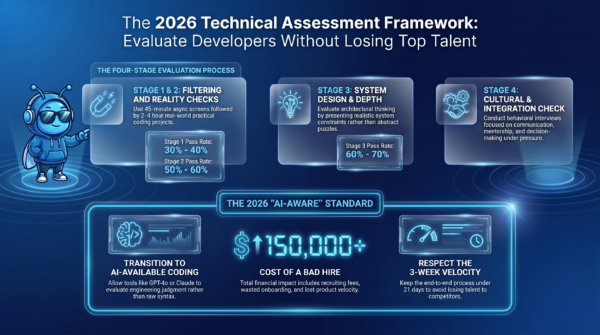

Total cost of a bad senior developer hire can exceed $150,000 when all factors are considered, according to industry analysis. And that doesn’t include the opportunity cost of the seat staying empty for months while you run a broken process.
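Summing the direct-cost ranges from the list above is a quick way to sanity-check that figure. The sketch below uses only the article’s own ranges; indirect costs are left out because the article gives no numbers for them.

```python
# Direct-cost ranges in USD, taken from the "Direct Costs" list in this article.
DIRECT_COSTS = {
    "recruiting_fees": (30_000, 50_000),
    "wasted_training_and_onboarding": (15_000, 25_000),
    "severance_and_replacement": (30_000, 50_000),
}


def direct_cost_range(costs: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the low ends and the high ends of each range separately."""
    low = sum(lo for lo, _ in costs.values())
    high = sum(hi for _, hi in costs.values())
    return low, high


low, high = direct_cost_range(DIRECT_COSTS)
print(f"Direct costs alone: ${low:,} to ${high:,}")
# Direct costs alone: $75,000 to $125,000
```

At the high end, indirect costs only need to add $25,000 for the total to pass $150,000.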

The Retention Impact:

Empty seats cost more than salaries. Every open position means existing developers carry extra load, which accelerates burnout and creates more turnover. This compounds: you lose one developer and can’t fill the role efficiently, two more leave because they’re overwhelmed, and now you have three open roles and a broken hiring process.

The Four-Stage Assessment Framework

Here’s a structured framework that actually works for evaluating technical talent without losing top candidates.

Stage One: Initial Technical Screen (The Filter)

Purpose: Verify baseline technical competency without wasting candidate or team time.

Format: 45 to 60 minute async coding assessment or structured technical phone screen.

What to test:

- Can they write clean, working code?

- Do they understand fundamental concepts for their level?

- Can they debug a simple issue?

- Do they ask clarifying questions before coding?

Implementation:

Use a platform like HackerRank, CodeSignal, or Codility for consistency. Keep it time-boxed (45 to 60 minutes maximum). Focus on 2 to 3 problems that mirror real work, not abstract puzzles.
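For illustration, a problem that mirrors real work might ask candidates to clean up a list of customer email addresses. The task, function name, and test cases below are hypothetical. What matters is that the edge cases (stray whitespace, mixed case, blank entries) are part of the grading, so a candidate who handles them, or asks about them, stands out.

```python
def dedupe_emails(raw: list[str]) -> list[str]:
    """Hypothetical screening task: return unique emails in first-seen order.

    Trims whitespace, compares case-insensitively, and drops blank entries.
    """
    seen: set[str] = set()
    result: list[str] = []
    for entry in raw:
        email = entry.strip().lower()
        if not email or email in seen:
            continue  # skip blanks and duplicates
        seen.add(email)
        result.append(email)
    return result


# Reference cases a grader might run against each submission.
assert dedupe_emails([]) == []
assert dedupe_emails(["  Ana@Example.com", "ana@example.com ", ""]) == ["ana@example.com"]
assert dedupe_emails(["b@x.io", "a@x.io", "B@X.io"]) == ["b@x.io", "a@x.io"]
```

Lowercasing the whole address is itself a simplifying assumption: RFC 5321 treats the local part of a mailbox as case-sensitive, even though most providers ignore case. That makes it a good clarifying question to listen for.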

Critical rule: Set a hard time limit per problem. A common failure pattern: a candidate burns 50 minutes on problem two, leaves problem three untouched, and fails not because they can’t code but because they can’t manage time under artificial pressure. Fix this by making time management explicit, and size the per-problem limit so the total fits within the overall cap. For example, with two problems in a 60-minute screen: “You have 25 minutes per problem. If you’re not making progress after 25 minutes, move to the next problem and come back if time permits.”

What success looks like:

Candidate submits working solutions to at least 2 of 3 problems. Code is readable with reasonable variable names. They handle basic edge cases. They demonstrate they can think through a problem before writing code.

Red flags:

Can’t produce working code in reasonable time. Doesn’t handle any edge cases. Code is completely unreadable. Doesn’t ask any clarifying questions on ambiguous problems.

Pass rate target: 30 to 40 percent of applicants. If you’re passing 70 percent, your screen is too easy. If you’re passing 10 percent, it’s too hard or testing the wrong things.

Stage Two: Practical Coding Assessment (The Reality Check)

Purpose: Evaluate how they approach real-world problems similar to actual work.

Format: 2 to 4 hour take-home project or 60 to 90 minute pair programming session.

What to test:

- Can they work with existing codebases?

- Do they write maintainable code?

- How do they handle ambiguity and incomplete requirements?

- Can they balance speed with quality?

- Do they communicate their thinking clearly?

Implementation:

Take-Home Option: Give them a realistic scenario that mirrors your actual work. Make the brief crystal clear, with explicit deliverables and time expectations. “This should take 3 to 4 hours. We’re evaluating code quality, testing approach, and how you handle ambiguous requirements.”

Keep it general and skill-based. If it’s highly specific to your company’s product or feels like real unpaid work, that’s a red flag for candidates and they’ll withdraw.

According to research, developers rate take-home assessments as the most effective way to demonstrate their skills, but they also rate irrelevant or poorly briefed tech assessments as a top pet peeve in hiring processes. The difference is preparation and relevance.

Pair Programming Option: Give them a partially implemented feature or a buggy codebase. Have them extend functionality or debug the issue while thinking aloud. This lets you see their process, communication style, and how they handle being observed.

What success looks like:

Candidate delivers working code that solves the core problem. Code is clean, readable, and demonstrates good judgment about trade-offs. They handle basic edge cases. If stuck, they ask smart questions. In pair programming, they communicate their reasoning as they work.

Red flags:

Delivers code that doesn’t run. Ignores the requirements entirely. Takes 12 hours on what should be a 3-hour project (can’t prioritize or make trade-offs). In pair programming, goes completely silent and can’t explain their thinking.

Pass rate target: 50 to 60 percent of candidates who reach this stage. You’ve already filtered in stage one, so most candidates here should be baseline competent.

Stage Three: System Design and Problem-Solving (The Depth Test)

Purpose: Evaluate architectural thinking, trade-off analysis, and depth of experience.

Format: 45 to 60 minute system design discussion or technical deep-dive.

What to test:

- Can they design systems under realistic constraints?

- Do they understand trade-offs between different approaches?

- How do they handle uncertainty and incomplete information?

- Can they explain complex technical concepts clearly?

- Do they think about scalability, maintainability, and cost?

Implementation:

For Senior+ Engineers: Present a realistic system design problem with explicit constraints. “Design a notification system that handles 100,000 daily active users. You have a $5,000 monthly budget. Notifications need a 99 percent delivery rate, but latency of up to 30 seconds is acceptable.”
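Strong candidates usually turn constraints like these into a quick back-of-envelope estimate before drawing any boxes. A minimal sketch of that arithmetic follows. The user count, budget, and delivery target come from the scenario; notifications per user, the peak-to-average ratio, and the 30-day month are assumptions made up for illustration.

```python
DAILY_ACTIVE_USERS = 100_000        # from the scenario
MONTHLY_BUDGET_USD = 5_000          # from the scenario
DELIVERY_TARGET_PERCENT = 99        # from the scenario
NOTIFICATIONS_PER_USER_PER_DAY = 5  # assumption, for illustration only
PEAK_TO_AVERAGE_RATIO = 10          # assumption: traffic is bursty
DAYS_PER_MONTH = 30                 # simplification
SECONDS_PER_DAY = 86_400

daily_volume = DAILY_ACTIVE_USERS * NOTIFICATIONS_PER_USER_PER_DAY
average_per_second = daily_volume / SECONDS_PER_DAY
peak_per_second = average_per_second * PEAK_TO_AVERAGE_RATIO
# Integer arithmetic avoids floating-point noise from computing 1 - 0.99.
allowed_failures_per_day = daily_volume * (100 - DELIVERY_TARGET_PERCENT) // 100
budget_per_thousand = MONTHLY_BUDGET_USD / (daily_volume * DAYS_PER_MONTH / 1_000)

print(f"{daily_volume:,} notifications/day")
# 500,000 notifications/day
print(f"~{average_per_second:.1f}/s average, ~{peak_per_second:.0f}/s peak")
# ~5.8/s average, ~58/s peak
print(f"Up to {allowed_failures_per_day:,} failed deliveries/day")
# Up to 5,000 failed deliveries/day
print(f"${budget_per_thousand:.2f} per 1,000 notifications")
# $0.33 per 1,000 notifications
```

Under these assumed inputs, throughput is modest and the 30-second latency allowance leaves room for queueing and retries, so the discussion naturally moves to delivery guarantees and cost per message.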

Don’t ask them to design Twitter in 45 minutes. That’s nonsense. Focus on one or two architectural decisions under realistic constraints like rate limits, partial failures, cost ceilings, or rollout safety.

For Mid-Level Engineers: Focus on smaller-scale design decisions or extending existing systems. “Here’s our current API structure. We need to add rate limiting. Walk me through your approach, what you’d consider, and what trade-offs you’d make.”
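One answer a mid-level candidate might sketch is a token bucket per API client. The class below is a minimal, single-process illustration, assuming in-memory state and an injectable clock so it can be tested. A production limiter would usually need shared state across instances, which is exactly the kind of trade-off this question is meant to surface.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens/sec."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity  # start full so a new client can burst
        self.last = clock()

    def allow(self) -> bool:
        """Consume one token if available; return whether the request may proceed."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Usage with a fake clock: burst of 2, then one refill after 1 second.
fake_now = [0.0]
bucket = TokenBucket(capacity=2, rate=1.0, clock=lambda: fake_now[0])
print([bucket.allow() for _ in range(3)])  # [True, True, False]
fake_now[0] = 1.0
print(bucket.allow())  # True
```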

For Junior Engineers: Skip formal system design. Instead, do a technical deep-dive on something from their experience or a technical concept relevant to the role. The goal is to evaluate their ability to explain technical topics clearly, not to test knowledge they don’t have yet.

What success looks like:

Candidate asks clarifying questions before jumping into solutions. They consider multiple approaches and explain trade-offs. They think about failure modes, not just happy paths. They can explain their reasoning clearly to both technical and non-technical audiences.

Red flags:

Jumps immediately to a solution without understanding the requirements. Can’t explain trade-offs between different approaches. Designs something wildly over-engineered with no justification. In technical deep-dives, can’t explain concepts they claim to know.

Pass rate target: 60 to 70 percent. Most candidates reaching stage three should pass this if your earlier stages worked.

Stage Four: Cultural and Communication Fit (The Integration Check)

Purpose: Evaluate whether they’ll work effectively with your team and company culture.

Format: 30 to 45 minute behavioral interview, increasingly important in 2026.

What to test:

- How do they communicate during incidents?

- How do they handle disagreement or feedback?

- How do they approach mentoring or being mentored?

- How do they make decisions under pressure?

- Do their values align with your team’s way of working?

Implementation:

Use the STAR method (Situation, Task, Action, Result) with questions focused on real scenarios they’ll face. Don’t ask generic questions like “what’s your greatest weakness.” Ask specific behavioral questions:

“Tell me about a time you had to debug a production issue under time pressure. What was your process?”

“Describe a situation where you disagreed with a technical decision your team was making. How did you handle it?”

“Walk me through a time you had to explain a complex technical concept to a non-technical stakeholder.”

According to technical interview evolution research, behavioral rounds now comprise 30 to 40 percent of total interview time at major tech companies, up from 10 to 15 percent five years ago. This reflects the reality that technical skill without communication ability and cultural fit creates problems.

What success looks like:

Candidate provides specific examples (not theoretical answers). They demonstrate self-awareness about strengths and growth areas. They show they can communicate effectively across technical levels. They handle feedback constructively.

Red flags:

Can’t provide concrete examples from their experience. Blames others for every failure or conflict. Shows no self-awareness. Communication style is extremely poor for someone at their level.

Pass rate target: 70 to 80 percent. If someone made it through your technical stages, most should pass cultural fit unless there’s a significant values misalignment or communication gap.

What’s Different in 2026: AI-Aware Assessments

The biggest shift in technical assessment in 2026 is AI-aware evaluation. Meta piloted AI-available coding rounds in late 2025, providing GPT-4o, Claude Sonnet, and Gemini 2.5 Pro to candidates during problems. This is now rolling out across FAANG and mid-size tech companies.

Why this matters:

If you’ve been designing your interview process as though AI tools are banned, you’re evaluating for the wrong skills. Baseline coding tasks are easily AI-assisted now. What matters is whether engineers use AI fluently, not whether they refuse to use it or use it blindly.

How to adapt your assessment:

Allow candidates to use AI tools during coding assessments. What you’re evaluating changes from “can they write this code” to “can they direct AI effectively, verify output, identify errors, and explain their process.”

Practice this yourself before interviewing. Use GitHub Copilot or Claude in a coding environment with a timer running. Give yourself a real problem, use AI to help, then critically review everything it produces before accepting it.

The evaluation criteria become: Do they prompt AI effectively? Do they catch AI errors? Do they explain their usage clearly? Can they debug AI-generated code?

What this looks like in practice:

Instead of watching someone write a sorting algorithm from scratch, you watch them use AI to generate it, then debug when the AI makes an off-by-one error, then explain why they chose that approach over alternatives AI suggested.
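Here is the kind of subtle off-by-one a candidate might need to catch. Both functions are illustrative, written for this article rather than taken from any real AI output. The buggy version stops its outer loop one index early, so the last element is never moved into place.

```python
def insertion_sort_buggy(items: list[int]) -> list[int]:
    a = list(items)
    for i in range(1, len(a) - 1):  # BUG: never visits the last index
        key = a[i]
        j = i - 1
        while j >= 0 and a[j] > key:
            a[j + 1] = a[j]  # shift larger elements right
            j -= 1
        a[j + 1] = key
    return a


def insertion_sort(items: list[int]) -> list[int]:
    a = list(items)
    for i in range(1, len(a)):  # fixed: include the final element
        key = a[i]
        j = i - 1
        while j >= 0 and a[j] > key:
            a[j + 1] = a[j]
            j -= 1
        a[j + 1] = key
    return a


print(insertion_sort_buggy([2, 1, 3]))  # [1, 2, 3] (looks correct)
print(insertion_sort_buggy([3, 2, 1]))  # [2, 3, 1] (bug exposed)
print(insertion_sort([3, 2, 1]))        # [1, 2, 3]
```

The buggy version passes any test where the largest value already sits last, which is why the signal worth watching for is whether the candidate picks inputs that would expose it.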

The signal you’re looking for is engineering judgment applied to AI-assisted work, not raw coding ability in isolation.

Common Mistakes That Drive Away Top Talent

Here are the patterns that make strong candidates withdraw from your process.

Mistake One: Excessive Time Investment

Asking candidates to spend 8-plus hours on take-home projects or go through 6-plus interview rounds. Top talent is interviewing at multiple companies. If your process takes 3 weeks and competitors move in 1 week, you lose candidates to speed.

Mistake Two: Irrelevant Assessments

Testing skills they’ll never use in the actual role. If your work is web development with React, why are you testing advanced algorithms? If they’ll never touch systems programming, why test C++ memory management?

Mistake Three: Disrespectful Treatment

Not providing feedback. Ghosting after final rounds. Making candidates wait weeks between stages. Rescheduling interviews multiple times. Strong developers have options. Disrespect signals what working at your company would be like.

Mistake Four: No Real-World Context

Abstract puzzles divorced from actual work. Trick questions designed to make candidates fail. Brain teasers with no technical relevance. This signals your engineering team doesn’t understand what actually matters.

Mistake Five: Inconsistent Evaluation

Different interviewers assess different things with different standards. No rubric. No clear pass/fail criteria. Candidates get rejected for reasons that have nothing to do with job performance.

Mistake Six: Ignoring Communication Entirely

Focusing only on whether code works, not on whether the candidate can explain their thinking, collaborate effectively, or communicate technical concepts. You end up hiring someone who can code but can’t work with your team.

How to Adapt for Contractors vs Full-Time Hires

One important note: interview processes for contract roles versus full-time positions have converged significantly in 2026.

The Old Pattern: Contract interviews were shorter and less rigorous. The assumption was “we can end the contract if it doesn’t work out, so lower the bar.”

The New Reality: According to career coaching research on 2026 technical interviews, contractor interview processes are now the same as or strikingly similar to full-time processes, especially when there’s a possibility the contract converts to full-time.

Why this changed:

Companies now have more leverage in the market post-2023 tech correction. They’re making contractors go through the same multi-step process as full-time hires because they can.

Also, many contractors evaluate companies just as carefully as full-time candidates do. They want to know if the work is interesting, if the team is competent, if the environment is functional. A thorough process signals that you take the work seriously.

What this means practically:

Use the same four-stage framework for both contractors and full-time hires. Adjust slightly for contract duration (a 3-month contract might skip some cultural deep-dive, a 12-month contract-to-hire should go through everything).

The one caution: for take-home assessments, make absolutely sure the project is general and skill-based. If it’s highly specific to your company’s product or feels like real unpaid work, that’s a red flag, especially for contractors, who are often completing it while working another contract.

Implementation Timeline and Checklist

Here’s how to actually implement this framework at your company.

Week One: Audit Current Process

Document your current technical assessment end-to-end. What stages exist? Who’s involved? What are you actually testing at each stage? What’s your pass rate at each stage? What’s time-to-hire? What’s offer acceptance rate?

Talk to recent hires about their experience. What worked? What felt broken? What almost made them withdraw?

Week Two: Design New Framework

Map your needs to the four-stage framework. What does success look like at each stage for your specific roles? What are you testing? What’s the format?

Create evaluation rubrics for each stage. What are the specific criteria? How do you score candidates consistently?
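A rubric can be as simple as fixed criteria, a shared 1-to-4 scale with written anchors, and weights agreed before interviews start. The sketch below is hypothetical: the criteria names, weights, and pass threshold are placeholders to adapt. What it illustrates is that every interviewer scores the same criteria on the same scale, and incomplete scorecards are rejected rather than silently averaged.

```python
# Hypothetical Stage Two criteria and weights (weights sum to 1.0).
WEIGHTS = {
    "correctness": 0.35,
    "code_quality": 0.25,
    "testing": 0.20,
    "communication": 0.20,
}
SCALE = range(1, 5)    # 1 = well below bar ... 4 = well above bar
PASS_THRESHOLD = 2.75  # hypothetical; set during interviewer calibration


def weighted_score(scores: dict[str, int]) -> float:
    """Combine one interviewer's per-criterion scores into a single number."""
    missing = WEIGHTS.keys() - scores.keys()
    unknown = scores.keys() - WEIGHTS.keys()
    if missing or unknown:
        raise ValueError(f"missing={sorted(missing)} unknown={sorted(unknown)}")
    for name, value in scores.items():
        if value not in SCALE:
            raise ValueError(f"{name}: {value} is outside the 1-4 scale")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


score = weighted_score(
    {"correctness": 3, "code_quality": 3, "testing": 2, "communication": 4}
)
print(f"{score:.2f}", "pass" if score >= PASS_THRESHOLD else "no pass")
# 3.00 pass
```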

Week Three: Build or Source Assessments

For Stage One, choose a platform (HackerRank, CodeSignal, Codility) or build custom screeners.

For Stage Two, create 2 to 3 take-home project templates that mirror real work. Brief them clearly. Test them on current engineers to calibrate time.

For Stage Three, prepare system design scenarios or technical deep-dive questions with evaluation criteria.

For Stage Four, prepare behavioral questions mapped to your actual cultural values and work scenarios.

Week Four: Train Your Interviewers

Your framework is only as good as its execution. Train everyone involved in interviewing on what you’re testing, how to evaluate it, and how to provide a good candidate experience.

Practice on each other. Run mock interviews. Calibrate scoring so you’re consistent.

Week Five: Pilot

Run the new process with 5 to 10 candidates. Collect feedback from both interviewers and candidates. What worked? What felt awkward? What needs adjustment?

Week Six: Iterate and Roll Out

Adjust based on pilot feedback. Document everything. Roll out to your full hiring process.

Set metrics: time-to-hire, candidate satisfaction, offer acceptance rate, quality of hire at 90 days, retention at 12 months. Track these to know if your process actually improved.
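Two of these metrics fall out of a simple record per candidate. The sketch below assumes a hypothetical record shape (the field names are illustrative, not from any particular applicant tracking system) and measures time-to-hire from application to accepted offer, which is one common definition. Quality of hire and retention need later data points, so they are left out.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class CandidateRecord:
    """Hypothetical per-candidate record; field names are illustrative."""
    applied_on: date
    offered: bool = False
    accepted_on: Optional[date] = None  # set only when an offer is accepted


def median_time_to_hire_days(records: list[CandidateRecord]) -> Optional[float]:
    """Median days from application to accepted offer, or None if no hires."""
    days = [(r.accepted_on - r.applied_on).days
            for r in records if r.accepted_on is not None]
    return median(days) if days else None


def offer_acceptance_rate(records: list[CandidateRecord]) -> Optional[float]:
    """Share of extended offers that were accepted, or None if no offers."""
    offers = [r for r in records if r.offered]
    if not offers:
        return None
    return sum(r.accepted_on is not None for r in offers) / len(offers)


records = [
    CandidateRecord(date(2026, 1, 5), offered=True, accepted_on=date(2026, 1, 23)),
    CandidateRecord(date(2026, 1, 7), offered=True, accepted_on=date(2026, 1, 28)),
    CandidateRecord(date(2026, 1, 9), offered=True),  # offer declined
    CandidateRecord(date(2026, 1, 12)),               # rejected before offer
]
print(median_time_to_hire_days(records))        # 19.5
print(f"{offer_acceptance_rate(records):.0%}")  # 67%
```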

How HR Oasis Helps You Build Better Assessments

At HR Oasis, we don’t just find developers for you. We help you build technical assessment processes that identify real talent without driving candidates away.

Here’s what that looks like:

Assessment Design: We work with your technical team to create evaluation frameworks tailored to your actual work, not generic algorithm tests. This includes realistic coding scenarios, appropriate system design problems for the level, and behavioral questions that matter for your culture.

Pre-Vetted Talent: When you work with us for hiring, candidates have already gone through technical assessment before they reach you. You’re interviewing people who can definitely do the work, so your process can focus on fit and depth rather than basic screening.

Process Consulting: We review your current technical interview process, identify what’s driving candidates away, and help you build something better. This includes interviewer training, rubric development, and candidate experience optimization.

Market Context: We help you understand what assessment processes competitors use, what candidates expect in Argentine and broader Latam markets, and how to structure evaluation that respects local professional norms while maintaining your quality bar.

Continuous Improvement: As your team grows and your needs change, we help you evolve your assessment process. What works for hiring your fifth engineer won’t work for hiring your fiftieth.

The result is you spend less time on broken assessment theater and more time identifying engineers who’ll actually strengthen your team.

Ready to fix your technical interview process?

📩 Let’s talk: info@hroasis.com

We’ll review your current process, identify what’s working and what’s driving candidates away, and help you build an assessment framework that actually predicts job performance.

Frequently Asked Questions

What’s the biggest mistake companies make in technical assessments?

Testing isolated algorithmic skills without business context. Most technical assessments test whether candidates can solve abstract puzzles under time pressure, not whether they can debug production issues, make architectural trade-offs, write maintainable code, or collaborate effectively. This leads to hiring people who are good at interviews but struggle with actual engineering work. The fix is shifting to assessments that mirror real work: working with existing codebases, debugging real scenarios, designing systems under realistic constraints, and evaluating both technical skills and communication ability.

How long should a technical assessment process take?

Total time from application to offer should be 2 to 3 weeks maximum for most engineering roles. This breaks down as Stage One initial screen (45 to 60 minutes async), Stage Two practical assessment (2 to 4 hours take-home or 60 to 90 minutes pair programming), Stage Three system design or deep-dive (45 to 60 minutes), and Stage Four cultural fit (30 to 45 minutes). If your process takes 6-plus weeks or requires 8-plus hours of candidate time, you’re losing top talent to competitors who move faster. Speed matters because strong candidates are interviewing at multiple companies simultaneously.
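Adding up the per-stage ranges shows how much candidate time this represents. The sketch below uses the ranges quoted in this article, including the 60-to-90-minute pair-programming option described under Stage Two.

```python
# (min, max) minutes per stage, using the ranges quoted in this article.
SHARED_STAGES = {
    "stage_one_screen": (45, 60),
    "stage_three_design": (45, 60),
    "stage_four_behavioral": (30, 45),
}
STAGE_TWO_OPTIONS = {
    "take_home": (120, 240),
    "pair_programming": (60, 90),
}


def candidate_hours(stage_two: str) -> tuple[float, float]:
    """Total candidate time in hours for one Stage Two option."""
    stages = [*SHARED_STAGES.values(), STAGE_TWO_OPTIONS[stage_two]]
    low = sum(lo for lo, _ in stages) / 60
    high = sum(hi for _, hi in stages) / 60
    return low, high


for option in STAGE_TWO_OPTIONS:
    low, high = candidate_hours(option)
    print(f"{option}: {low:g} to {high:g} hours")
# take_home: 4 to 6.75 hours
# pair_programming: 3 to 4.25 hours
```

Both paths stay under the 8-hour mark flagged above as too much.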

Should contractors go through the same assessment as full-time hires?

Yes. In 2026, contractor interview processes have converged with full-time processes. Companies now put contractors through the same multi-step evaluation, especially when there’s a possibility of conversion to full-time. This reflects increased employer leverage in the market and recognition that contractors need to integrate effectively with teams. The one adjustment: for very short contracts (under 3 months), you might streamline the cultural deep-dive, but technical evaluation should be equally rigorous. One caution: ensure take-home projects are general and skill-based, not unpaid work specific to your product.

What’s changed with AI-aware technical assessments in 2026?

The biggest shift is allowing candidates to use AI tools (GPT-4o, Claude, GitHub Copilot) during coding assessments. Meta piloted this in late 2025, and it’s rolling out across tech companies. Evaluation criteria have shifted from “can they write this code from scratch” to “can they direct AI effectively, verify output, identify errors, and explain their process.” Engineering judgment applied to AI-assisted work is now the signal, not raw coding ability in isolation. If your process bans AI tools, you’re testing for skills that don’t match how modern development actually works.

How do you avoid losing top talent during the assessment process?

Respect candidates’ time by keeping total investment under 6 to 8 hours and moving quickly between stages. Test relevant skills that mirror actual work, not abstract puzzles. Provide clear expectations at each stage with transparent evaluation criteria. Give feedback regardless of outcome. Treat candidates professionally throughout: no ghosting or endless rescheduling. Make the process feel like a glimpse of what working at your company would be like. Top talent has options; if your process is disrespectful or irrelevant, they’ll withdraw and accept offers elsewhere.

What’s a good pass rate at each assessment stage?

Stage One initial screen should pass 30 to 40 percent of applicants. If you’re passing 70 percent, it’s too easy. If you’re passing 10 percent, it’s too hard or testing the wrong things. Stage Two practical assessment should pass 50 to 60 percent since you’ve already filtered. Stage Three system design should pass 60 to 70 percent. Stage Four cultural fit should pass 70 to 80 percent since technical competence is established. If your overall funnel passes fewer than 5 percent of applicants through all stages, your process is likely too stringent and you’re rejecting qualified candidates.
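Because each stage only sees candidates who passed the previous one, the per-stage targets multiply. A short check using only the targets quoted above:

```python
from math import prod

# (low, high) pass-rate targets for Stages One to Four, from this article.
STAGE_TARGETS = [(0.30, 0.40), (0.50, 0.60), (0.60, 0.70), (0.70, 0.80)]

low = prod(lo for lo, _ in STAGE_TARGETS)
high = prod(hi for _, hi in STAGE_TARGETS)
print(f"End-to-end pass rate: {low:.1%} to {high:.1%}")
# End-to-end pass rate: 6.3% to 13.4%
print(f"Per 1,000 applicants: {1000 * low:.0f} to {1000 * high:.0f}")
# Per 1,000 applicants: 63 to 134
```

Hitting every stage target yields roughly 6 to 13 percent end to end, so an overall rate below 5 percent means at least one stage is running stricter than its target.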

How do you evaluate communication skills in technical interviews?

Communication evaluation happens throughout the process, not just in behavioral rounds. In pair programming, listen to how they explain their thinking. In system design, assess whether they can articulate trade-offs clearly to both technical and non-technical audiences. In take-home projects, evaluate code readability and documentation. In behavioral rounds, use STAR questions about specific scenarios like explaining technical concepts to stakeholders or handling disagreements. According to research, behavioral rounds now comprise 30 to 40 percent of interview time, up from 10 to 15 percent, reflecting that technical skill without communication ability creates team problems.

What are the biggest red flags in a candidate’s technical assessment?

Major red flags include jumping into code without clarifying requirements (shows they don’t understand problem-solving), delivering code that doesn’t run or ignoring requirements entirely (lack of basic competence), inability to explain their technical decisions (can’t articulate reasoning), going completely silent during pair programming (communication gap), blaming others for every failure in behavioral questions (no self-awareness), and taking 12-plus hours on what should be a 3-hour project without acknowledging it (can’t prioritize or make trade-offs). One or two minor issues are normal, but multiple major red flags indicate a poor fit.

How do you make technical assessments fair for remote candidates?

Use async assessments for initial screening so time zones don’t matter. For live sessions, offer flexible scheduling across time zones. Ensure all candidates use the same tools and platforms so there’s no advantage to being in-office. Allow candidates to use documentation and tools just as they would in real work, with no artificial constraints. Provide clear written instructions for all assessments, not just verbal ones. With the candidate’s consent, record pair programming sessions so you can review them if connection issues occur. Treat remote candidates exactly as you’d treat local candidates: the format might differ, but rigor and respect should be identical.

What’s the ROI of fixing a broken technical interview process?

Direct financial impact includes reducing cost per hire by 30 to 40 percent through faster time-to-fill and better hit rates, decreasing turnover by 40 percent through better role fit, and avoiding the $150,000-plus cost of bad senior hires. Indirect benefits include improved employer brand as word spreads about your fair process, higher offer acceptance rates (candidates want to work somewhere that evaluates talent well), better team morale since senior engineers aren’t wasting time on broken assessment theater, and faster product velocity from having the right people in seats. Companies that invest in fixing technical assessment typically see ROI within 6 to 9 months.